This happened a while ago but I wanted to share the resolution in case it helps others :)

After a long day I sat down in front of the TV to watch something from my Linux-based Plex media server. I’d been slowly ripping my physical films and copying them to my library, but noticed they hadn’t pulled in the correct cover art. Even worse, all existing items had lost their covers and metadata ☹

Logging into my library via Firefox, I saw the same issue in the browser. Pressing ‘Match’ found no films for me to select. Something wasn’t right 🤔

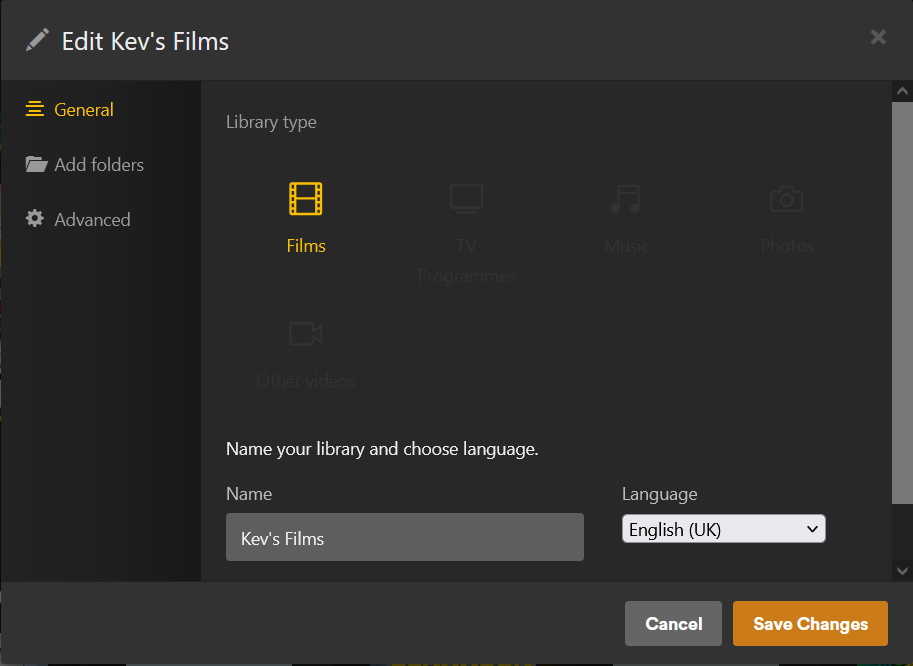

Opening the library details showed that the library type was set to ‘Other Videos’ when it should have been ‘Films’. Also the default media scanner for the library was now set to ‘Plex Video Files’ and the scanner agent was set to ‘Personal Media’, both incorrect.

Changing the scanner and agent back to the Plex Movie options failed when I pressed ‘save’. A message popped up saying ‘Your changes could not be saved’ 🙄

Time to see what the logs say with:

tail -f /var/lib/plexmediaserver/Library/Application\ Support/Plex\ Media\ Server/Logs/Plex\ Media\ Server.log

Aha! Now I could see that when I pressed save, an HTTP PUT request was generating an HTTP 400 error with the message “library missing language”.
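If you'd rather search the log than watch it scroll past, here's a rough Python sketch that pulls out any line mentioning the error. The log path is the one from my `tail` command above and may differ on your install; the `find_lines` helper is just a name I made up for this example, and it only does a plain case-insensitive text match.

```python
from pathlib import Path

# Path taken from the tail command above; adjust it for your own install.
LOG_PATH = Path(
    "/var/lib/plexmediaserver/Library/Application Support/"
    "Plex Media Server/Logs/Plex Media Server.log"
)


def find_lines(lines, needle="missing language"):
    """Return the lines that contain `needle`, ignoring case."""
    needle = needle.lower()
    return [line.rstrip("\n") for line in lines if needle in line.lower()]


# Only read the log if it actually exists on this machine.
if __name__ == "__main__" and LOG_PATH.exists():
    with LOG_PATH.open(encoding="utf-8", errors="replace") as log:
        for hit in find_lines(log):
            print(hit)
```

Press save in the Plex web UI first, then run the script so the failed request is already in the log.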

Checking the library again, I could indeed see that the language wasn’t set at all and the dropdown had no other options available :/

Resigning myself to this library being broken, I created a new movie library, pointed it at the same source folder and waited for everything to be scanned. Afterwards I had a normal library with the film covers I’d expect to see. Unfortunately it didn’t keep my watch history, and all films were marked as unwatched. Will I ever fix this and keep my watch history?! 😭

Yes, yes I will 😎

Knowing Plex stores all of its data in a SQLite DB, I wanted to go and check the source so I could see if it was a data problem that could be fixed directly.

Find your Plex DB

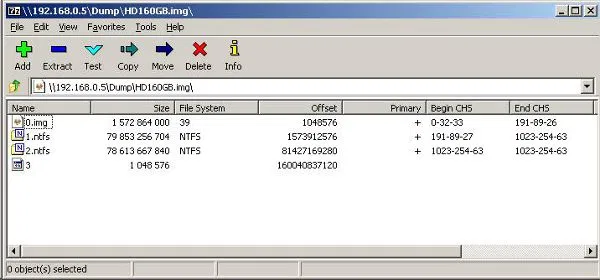

This page helped me find the location of my Plex library database. Depending on your OS and platform, your Plex content database could be in a different place. My setup has all core Plex files in its own folder:

mediapool\plexmediaserver\Library\Application Support\Plex Media Server\Plug-in Support\Databases

Open the database and find the data

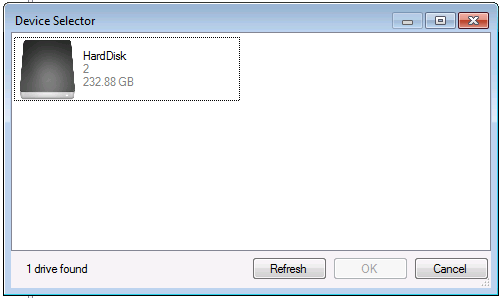

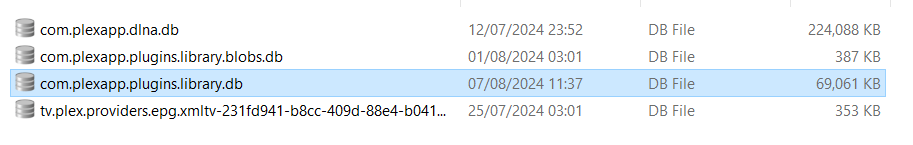

I used DB Browser for SQLite, available for Linux and Windows, to open the main library file com.plexapp.plugins.library.db.

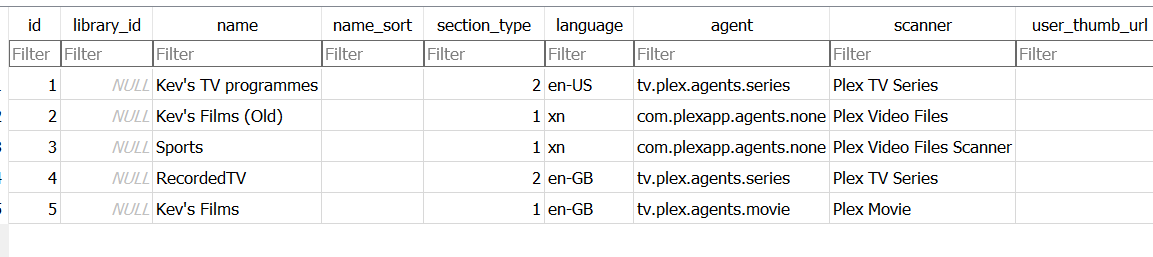

I scanned through tables looking for where the library info was kept and found it in a table called library_sections. The SQL query SELECT * FROM library_sections; returned a list of all the libraries I have.
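The same check can be scripted. This is a minimal sketch that assumes Python's built-in `sqlite3` module can read the Plex database file just as DB Browser did; I only tried DB Browser myself. It opens the file read-only, so it can't change anything, and `list_library_sections` is a name I've invented for the example.

```python
import sqlite3
from pathlib import Path


def list_library_sections(db_path):
    """Return every row of library_sections as a list of dicts."""
    # as_uri() escapes spaces in the path; mode=ro opens the file read-only.
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    conn = sqlite3.connect(uri, uri=True)
    conn.row_factory = sqlite3.Row
    try:
        rows = conn.execute("SELECT * FROM library_sections").fetchall()
        return [dict(row) for row in rows]
    finally:
        conn.close()


# Example use, run against a copy of the database:
#   for section in list_library_sections("com.plexapp.plugins.library.db"):
#       print(section["id"], section["language"], section["agent"])
```

A broken library like mine should stand out as the row with an empty language.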

Unfortunately I didn’t grab a picture showing the incorrect value but I could tell from looking at the old and new film libraries that the original one was wrong as it had no language set and the incorrect section type for a movies folder.

Fixing the library data

Thankfully the fix was easy.

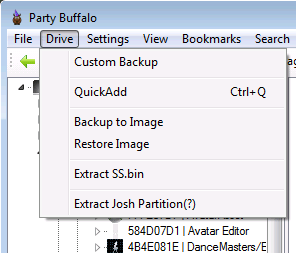

First of all, take a backup of the database. With the Plex server offline you can make a copy of the com.plexapp.plugins.library.db file and keep that safe. Now we can ~~break~~ fix things!
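I copied the file by hand, but a small script makes the backup repeatable. The sketch below uses a hypothetical `backup_database` helper that copies the database into a new timestamped folder. It also picks up SQLite's `-wal` and `-shm` side files if they happen to sit next to the database. Stop the Plex server before running it.

```python
import shutil
import time
from pathlib import Path


def backup_database(db_path, backup_dir):
    """Copy the database, plus any -wal/-shm side files, into a new folder."""
    db_path = Path(db_path)
    if not db_path.is_file():
        raise FileNotFoundError(f"no database found at {db_path}")

    # A fresh folder per backup, so an earlier backup is never overwritten.
    stamp = time.strftime("%Y%m%d-%H%M%S")
    target_dir = Path(backup_dir) / f"plex-db-backup-{stamp}"
    target_dir.mkdir(parents=True)

    copied = []
    for suffix in ("", "-wal", "-shm"):
        source = db_path.with_name(db_path.name + suffix)
        if source.exists():
            copied.append(Path(shutil.copy2(source, target_dir / source.name)))
    return copied
```

To restore, stop Plex again and copy the files from the backup folder back over the originals.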

I noted the ID of the original library that was broken (Kev's Films (Old)), which was 2 in my case, and also noted that the language of the new library I’d created was en-GB. I put those values into this query, which also sets the agent back to the Plex Movie agent, and executed the SQL:

    UPDATE library_sections
    SET language = 'en-GB',
        agent = 'tv.plex.agents.movie'
    WHERE id = 2;
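If you'd rather not type SQL into a GUI, here is a hedged sketch of the same update wrapped in a transaction. It assumes Python's built-in `sqlite3` module can write to this table the way DB Browser did for me, which I haven't verified. `set_language_and_agent` is a made-up helper name. It refuses to commit unless exactly one row changes, so a wrong ID leaves the database untouched.

```python
import sqlite3


def set_language_and_agent(db_path, section_id, language, agent):
    """Update one library section; roll back unless exactly one row changes."""
    conn = sqlite3.connect(str(db_path))
    try:
        with conn:  # commits on success, rolls back if an exception is raised
            cursor = conn.execute(
                "UPDATE library_sections SET language = ?, agent = ? WHERE id = ?",
                (language, agent, section_id),
            )
            if cursor.rowcount != 1:
                raise ValueError(
                    f"expected to update 1 row, updated {cursor.rowcount}"
                )
    finally:
        conn.close()


# Example use with the values from my library (yours will differ):
#   set_language_and_agent("com.plexapp.plugins.library.db", 2,
#                          "en-GB", "tv.plex.agents.movie")
```

As with the manual route, run it only with the Plex server stopped and a backup in place.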

Is it fixed yet?

It worked! I saw the library had the right library type, and I was able to kick off the scanner and watch as it identified and updated the metadata for the content in the library.

I never did learn why the issue happened or if it was possible to fix without directly modifying the database. But here we are. A working library :) I hope this helps anyone else with the same issue.
